VSAN 6.0 Part 10 – 10% cache recommendation for AF-VSAN

With the release of VSAN 6.0, and the new all-flash configuration (AF-VSAN), I have received a number of queries around our 10% cache recommendation. The main query is, since AF-VSAN no longer requires a read cache, can we get away with a smaller write cache/buffer size?

Before getting into the cache sizing, it is probably worth beginning this post with an explanation about the caching algorithm changes between version 5.5 and 6.0. In VSAN 5.5, which came as a hybrid configuration only with a mixture of flash and spinning disk, cache behaved as both a write buffer (30%) and read cache (70%). If a read request was not satisfied by the cache, in other words there was a read cache miss, then the data block was retrieved from the capacity layer. This was an expensive operation, especially in terms of latency, so the guideline was to keep your working set in cache as much as possible. Since the majority of virtualized applications have a working set somewhere in the region of 10%, this was where the cache size recommendation of 10% came from. With hybrid, there is regular destaging of data blocks from write cache to spinning disk. This is a proximal algorithm, which looks to destage data blocks that are contiguous (adjacent to one another). This speeds up the destaging operations.

Now let's turn our attention to the new all-flash model, where the cache layer AND the capacity layer are both made up of flash devices. Since the capacity layer is also flash, if there is a read cache miss, fetching the data block from the capacity layer is not going to be as expensive as in a hybrid solution which uses spinning disk. Instead, it is actually a very quick (typically sub-millisecond) operation. So really we don’t need to have a read cache in AF-VSAN since the capacity layer can handle reads effectively.

However, AF-VSAN still has a write cache, and all VM writes hit this cache device. The major algorithm change, apart from there being no read cache, is how the write cache is used. The write cache is now used to hold “hot” blocks of data (data that is in a state of change). Only when the blocks become “cold” (no longer updated/written) are they moved to the capacity layer.

In version 6.0, we continue to have a hybrid model, but there is also the all-flash model. The hybrid model continues to have the 10% recommendation based on the reasons outlined above. But then so does the AF-VSAN model. The reason will become clear shortly. First there are a number of flash terms to familiarize yourself with, especially around the endurance and longevity of flash devices.

- Tier1 – the caching layer in an AF-VSAN

- Tier2 – the capacity layer in an AF-VSAN

- DWPD – (Full) Drive Writes Per Day endurance rating on SSDs

- Write Amplification (WA) – More data is written to the flash than the workload asked for, because the SSD writes updates to new cells rather than erasing and rewriting existing cells, leaving old copies of data to be cleaned up via garbage collection. This is accounted for in the endurance specifications of the device.

- VSAN Write Amplification (VWA) – A write operation from a workload results in some additional writes in the SSD because of VSAN’s metadata. For example, for a bunch of blocks written in the Physical Log, we write a record in the Logical Log.

- Overwrite Ratio – The average number of times a “hot” block is written in tier1 before it becomes “cold” and is moved to tier2

In a nutshell, the objective behind the 10% tier1 vs tier2 flash sizing recommendation is to try to have both tiers wear out around the same time. This means that the write ratio should match the endurance ratio. What we aim for is to have the two tiers have the same “life time” or LT. We can write this as the life time of tier1 should be equal to the life time of tier2:

- LT1 = LT2

The “life time” of a device can be rewritten as “Total TB written / TB written per Day”. Another way of writing this is:

- TW1/TWPD1 = TW2/TWPD2

TB written Per Day (TWPD) can be calculated as “SSD Size * DWPD” where DWPD is full drive writes per day. If we add this calculation to tier1 and tier2 respectively:

- TWPD1 = (SSDSize1 * DWPD1)

- TWPD2 = (SSDSize2 * DWPD2)

And to keep the objective of having both tiers wear out at the same time, we get:

- TW1/(SSDSize1 * DWPD1) = TW2/(SSDSize2 * DWPD2)
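
As a minimal sketch of the life time relationship above, the snippet below computes LT for each tier as total TB written divided by TB written per day. The function name and all of the figures are hypothetical, picked only so that the two tiers come out with the same life time; they are not vendor numbers.

```python
def lifetime_days(total_tb_written, ssd_size_tb, dwpd):
    """Life time = Total TB written / TB written per Day.

    TB written per Day (TWPD) is taken as SSD size * DWPD, as in the text.
    """
    tb_written_per_day = ssd_size_tb * dwpd
    return total_tb_written / tb_written_per_day


# Hypothetical figures, chosen only to illustrate LT1 = LT2.
lt1 = lifetime_days(total_tb_written=7300.0, ssd_size_tb=0.4, dwpd=10)
lt2 = lifetime_days(total_tb_written=4380.0, ssd_size_tb=8.0, dwpd=0.3)

print(f"tier1 life time: {lt1:.0f} days")
print(f"tier2 life time: {lt2:.0f} days")
```

With these made-up inputs both tiers last about the same number of days, which is the balance the sizing recommendation is aiming for.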

To write this another way, focusing on Total TB written:

- TW1/TW2 = (SSDSize1 * DWPD1) / (SSDSize2 * DWPD2)

To write this another way, focusing on capacity:

- SSDSize1/SSDSize2 = (TW1/TW2) * (DWPD2/DWPD1)
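
The capacity form of the equation is easy to turn into a small helper. This is only a sketch; the function and parameter names are my own and not part of any VSAN tooling.

```python
def cache_to_capacity_ratio(tw_ratio, dwpd1, dwpd2):
    """Return SSDSize1/SSDSize2 = (TW1/TW2) * (DWPD2/DWPD1).

    tw_ratio -- TW1/TW2, total writes to tier1 relative to tier2
    dwpd1    -- drive writes per day rating of the tier1 (cache) device
    dwpd2    -- drive writes per day rating of the tier2 (capacity) device
    """
    if dwpd1 <= 0 or dwpd2 <= 0:
        raise ValueError("DWPD ratings must be positive")
    return tw_ratio * (dwpd2 / dwpd1)


# Sanity check: identical devices receiving identical writes need equal sizes.
print(cache_to_capacity_ratio(tw_ratio=1.0, dwpd1=5, dwpd2=5))
```

The higher the endurance of tier1 relative to tier2, the smaller tier1 can be for the same write ratio.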

So now we have the ratio of the tier1 and tier2 capacities expressed in terms of the writes each tier receives and the endurance rating of each device. Let’s now take a working example using some Intel SSDs and see how this works out. Let’s take the Intel S3700 for tier1 and S3500 for tier2. The ratings are as follows:

- DWPD1 for Intel S3700 = 10

- DWPD2 for Intel S3500 = 0.3

- DWPD2/DWPD1 = 0.3/10 = 0.03, or roughly 1/30

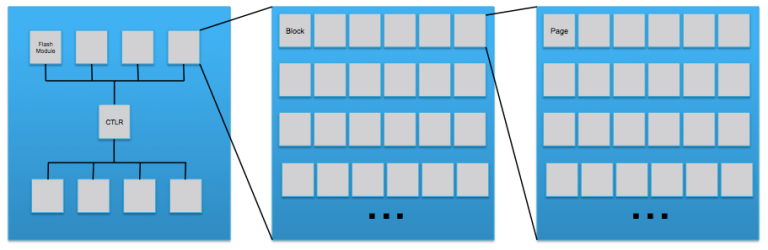

The next step in the calculation is to factor in a VSAN Write Amplification (VWA) of the caching layer, which is the TW1/TW2 part of the equation. This VWA value is different to normal Write Amplification (WA) on SSD devices. First, let’s discuss what Write Amplification (WA) actually is, to distinguish it from what we are calling VWA. Let’s take a look at the layout of a typical SSD, which shows the flash modules, the blocks and then the pages where data is stored:

Next, we will take a look at how data is written to the SSD. This will explain the concept of write amplification. In this first step below, 4 pages (A, B, C & D) are written to pages in block 1.

In this next step, 4 new pages (E, F, G & H) are written to block 1 and original 4 pages (A-D) on block 1 are updated with new data. A-D updates are written to new pages in the block, leaving stale data in the original A-D pages. This is Write Amplification (WA).

To reuse these original (A-D) pages, they must be erased, but erasure is per block. Therefore active data is read from block 1 and rewritten to block 2. Block 1 is now erased, and shown in step 3 below. This block erasing is known as garbage collection.
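
To put a number on the three steps above, here is a toy calculation. It uses the common definition of WA as pages physically written to flash divided by pages written by the host, which this post does not spell out, so treat the definition and the resulting figure as illustrative only.

```python
def write_amplification(host_pages, relocated_pages):
    """Pages physically written to flash per page written by the host."""
    flash_pages = host_pages + relocated_pages
    return flash_pages / host_pages


# Step 1: A-D written. Step 2: E-H written, plus updated copies of A-D.
host_pages = 4 + 4 + 4
# Step 3: garbage collection copies the 8 live pages (E-H and the
# updated A-D) from block 1 to block 2 before block 1 is erased.
relocated_pages = 8

wa = write_amplification(host_pages, relocated_pages)
print(f"write amplification in this toy example: {wa:.2f}")
```

Without the garbage collection copy the factor would be exactly 1; the relocated pages are what push it above 1.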

This Write Amplification (WA) is already accounted for in the endurance specifications of the device and does not need to factor into our calculations. In fact, with the log structures used by Virtual SAN, a very benign workload is imposed on the flash device, mitigating WA as much as possible.

Instead, we need to factor in the VSAN Write Amplification (VWA) in the caching layer. As mentioned earlier, this is about the fact that a write operation from a workload results in some additional writes in the SSD because of VSAN’s metadata. For example, when blocks are written to the Physical Log (PLog), VSAN also writes a record in the Logical Log (LLog). We are using a VWA value in the cache layer of 1.5. We are doing some “future proofing” here with this value as we are also considering dedupe and checksum in this calculation. The VSAN Write Amplification value is very dependent on the I/O patterns. In general, the larger the user data I/O, the smaller the relative size of the metadata, and therefore the smaller the VWA in terms of endurance impact. However VSAN strives to keep it below 2, and 1.5 is a reasonable assumption. Currently, since we don’t have dedupe or software checksum, we could choose a different VWA value. But as I mentioned, we are using 1.5 as a way of “future proofing” the calculation. This will give us a TW1/TW2 = (1.5 / 1) = 1.5. Returning to the previous calculation, we now have the following cache to capacity ratio:

- SSDSize1/SSDSize2 = (TW1/TW2) * (DWPD2/DWPD1)

- SSDSize1/SSDSize2 = (1.5) * (1 / 30)

- SSDSize1/SSDSize2 = 1.5 / 30 = 5% (4.5% if the unrounded 0.3/10 = 0.03 is used)
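
Since the VWA value depends on the I/O pattern, it can be useful to see how sensitive the raw ratio is to it. The sketch below plugs the two Intel DWPD ratings quoted above into the capacity equation for a few VWA values between 1 and 2; the function name is hypothetical, and it uses the unrounded 0.3/10 rather than 1/30.

```python
DWPD_TIER1 = 10   # Intel S3700 rating quoted in this post
DWPD_TIER2 = 0.3  # Intel S3500 rating quoted in this post


def raw_cache_ratio(vwa, dwpd1=DWPD_TIER1, dwpd2=DWPD_TIER2):
    """Raw SSDSize1/SSDSize2 with TW1/TW2 taken to be the VWA value."""
    return vwa * (dwpd2 / dwpd1)


for vwa in (1.0, 1.5, 2.0):
    print(f"VWA {vwa:.1f}: raw cache ratio = {raw_cache_ratio(vwa):.1%}")
```

The spread across that range of VWA values is a few percentage points, which is why a single rounded figure is good enough for a rule of thumb.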

This means for an all-flash Virtual SAN configuration where we wish for the life time of the cache layer and the capacity layer to be pretty similar, we should deploy a configuration that has at least 5% cache when compared to the capacity size. However, this is the raw ratio at the disk group level. But we have not yet factored in the Overwrite Ratio (OR) which is the number of times that a block is overwritten in tier1 before we move it from the tier1 cache layer to the tier2 capacity layer, as shown in this diagram:

Written another way, this is how often a block in tier1 is overwritten before it goes “cold” and is moved to tier2. Remember that we have a new caching algorithm in AF-VSAN that tries to keep hot blocks in the tier1 cache layer. For this calculation, we have chosen (somewhat arbitrarily) a value of 2. So with the raw ratio previously at 5%, now when we factor in OR (5% * 2), we arrive at the rule of thumb of 10% for flash caching in an AF-VSAN configuration. As we get more metrics around usage, etc., we may change this requirement in the future.
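
Putting the raw ratio and the Overwrite Ratio together, a cache size for a given amount of capacity flash can be sketched as follows. The function name and the 4 TB capacity figure are hypothetical; the 5% and 2 defaults are the values used in this post.

```python
def recommended_cache_tb(capacity_tb, raw_ratio=0.05, overwrite_ratio=2):
    """Tier1 size = tier2 raw capacity * raw ratio * Overwrite Ratio."""
    return capacity_tb * raw_ratio * overwrite_ratio


# Hypothetical example: 4 TB of raw capacity flash.
cache_tb = recommended_cache_tb(capacity_tb=4.0)
print(f"suggested tier1 cache: {cache_tb:.2f} TB")
```

If you have measured a different overwrite ratio for your workload, passing it in place of the default shows how far the 10% rule of thumb would move.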

However, if you have better insight into your workload, additional, more granular calculations are possible:

- If the first tier has a really high endurance rating compared to the second tier, then a smaller first tier would be fine, and vice versa.

- If you have a very active data-set, such as a database, then you would expect to see a large overwrite ratio before the data is “cold” and moved to the second tier. In this case, you would need a much bigger ratio than 5% raw, to give you both the performance as well as better endurance protection of the first tier.

- If you know your IOPS requirement is quite low, but you want all-flash to give you a low latency variance, then neither the first nor the second tier SSDs are in danger of wearing out in a 5 year period. In this case, one can have a much smaller first tier flash device. VMware Horizon View may fall into this category.

- If the maximum IOPS is known or can be predicted somewhat reliably, customers can just calculate the cache tier1 size directly from the endurance requirement, after taking into account write amplification.
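
For the last case, here is one way the direct calculation might look. This is a sketch under several assumptions of my own: the write IOPS figure is sustained around the clock, the DWPD rating holds for as long as you want the device to last, and the only amplification applied is the VWA of 1.5 used earlier. It sizes purely for endurance and says nothing about performance.

```python
SECONDS_PER_DAY = 86400


def writes_gb_per_day(write_iops, io_size_kb):
    """Daily write volume for a sustained write rate (assumed constant)."""
    return write_iops * io_size_kb * SECONDS_PER_DAY / (1024 * 1024)


def min_cache_size_gb(workload_writes_gb_per_day, dwpd1, vwa=1.5):
    """Smallest tier1 device that stays within its DWPD rating."""
    device_writes_gb_per_day = workload_writes_gb_per_day * vwa
    return device_writes_gb_per_day / dwpd1


# Hypothetical workload: 5000 sustained 4 KB write IOPS, DWPD 10 cache device.
daily_gb = writes_gb_per_day(write_iops=5000, io_size_kb=4)
print(f"workload writes: {daily_gb:.0f} GB/day")
print(f"minimum tier1 size: {min_cache_size_gb(daily_gb, dwpd1=10):.0f} GB")
```

A real workload is rarely this steady, so a figure like this is better read as a lower bound than as a sizing target.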

Hope this helps to clarify why we chose a 10% rule of thumb for the cache to capacity ratio in all-flash VSAN configurations. To recap, the objective is to try to have both tiers wear out around the same time. Note again that this is raw device capacities, since the calculations are based entirely on the relative endurance and capacities of the devices.
